Hey Folks,

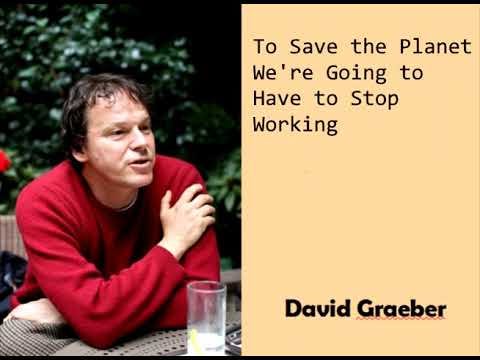

David Graeber, the greatest anthropologist of our age, died four years ago.

To honour his legacy, I’ve been posting some “bite-sized Graeber” in the hope of inspiring people to explore his important, brilliant, and prescient work.

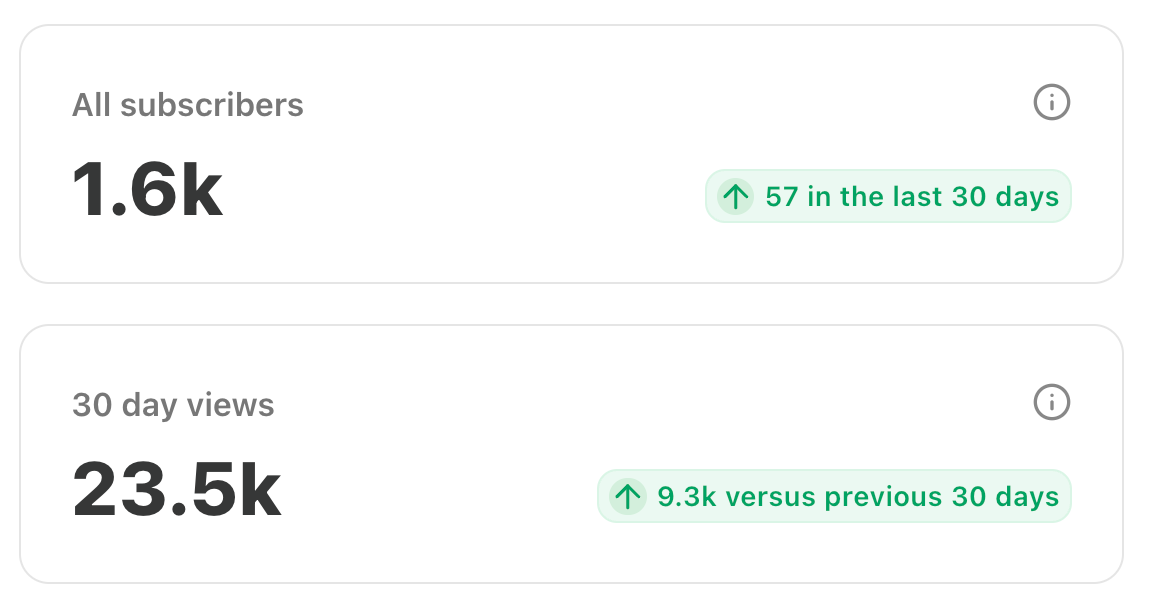

I’ve received quite a lot of positive feedback on this series, and it looks like we might be on our way to hitting a new milestone.

So, I’ve decided that I’m going to make it part of my thing to post selections from Graeber’s work. And if anyone wants to call me a Graeberite, by all means, feel free.

Today I’d like to address some critiques of Graeber. As O.G. Nevermorons will be aware, I’ve made a running joke out of my beef with Darren Allen, one of the world’s leading green anarchist theorists.

If you’ve been in a coma for the past five years or so, Darren Allen is a leading proponent of Primalism, which is way cooler than primitivism.

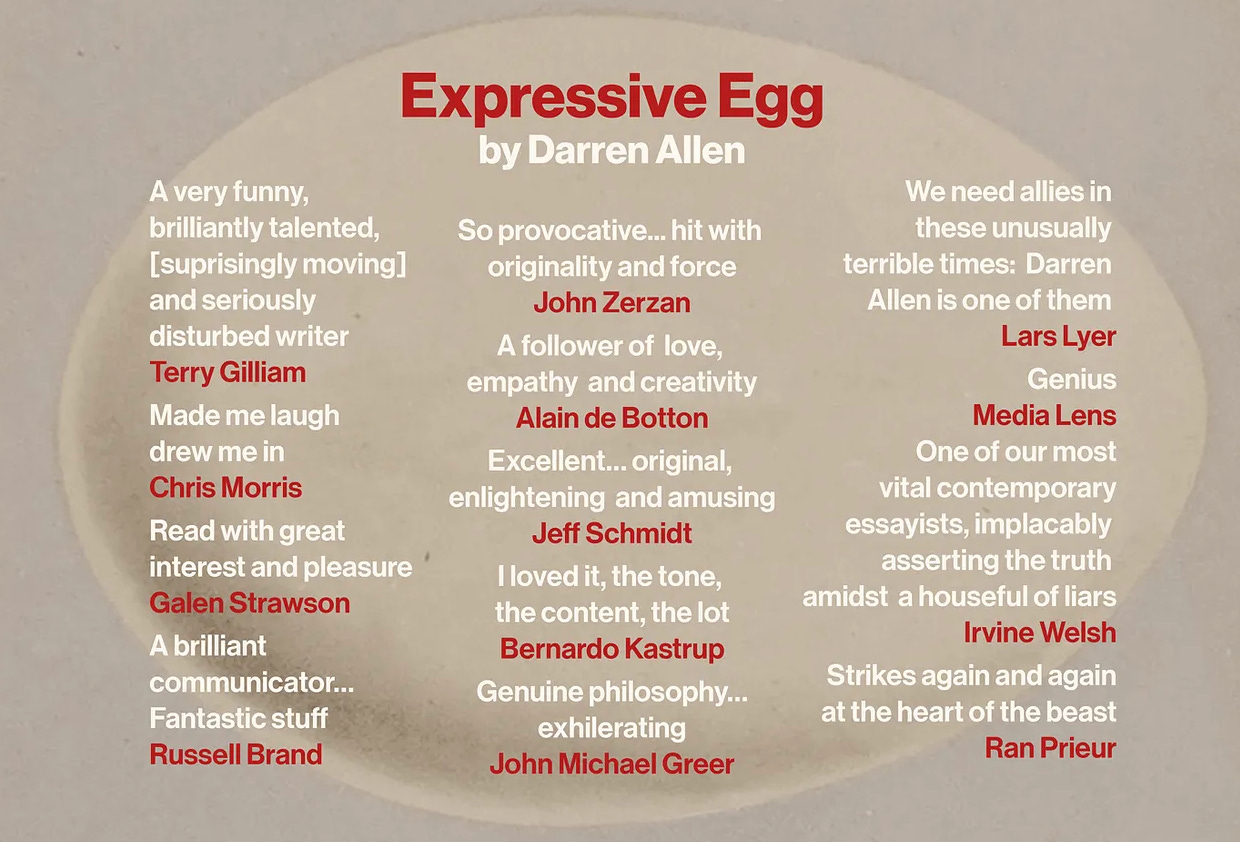

Darren Allen also has a way cooler collection of blurbs than any of the rest of us.

If you want to start exploring his work, you can start here:

Given that my politics are VERY similar to Allen’s, my jokes about him might have left some of you scratching your heads.

But it all goes back to the harsh words that Darren Allen had for David Graeber after his death.

After Graeber died suddenly in the midst of the biggest psy op in recorded history, Darren Allen had this to say:

David Graeber was, like Noam Chomsky, a rationalist, democratic, technophilic socialist who appropriated the radicalism of anarchism in order to distance himself from the conspicuous futility of the professional leftism he embodied. He uncritically supported democracy (and was unable or unwilling to accept that it tyrannously subordinates individuals), he was uncritical of standard leftist causes (such as anti-racism, and other tools of management), he had no real interest in genuine anarchist revolt (continually focusing his ‘activism’ through statist party politics), he was uncritical of professionalism (firing potshots at every job conceivable in his book Bullshit Jobs, yet curiously reluctant to attack doctors, teachers, lawyers and so on), he was uncritical of technology (lamenting that we’re not technologically advanced enough) and when push came to shove and state-imposed lockdowns inaugurated a new dawn of subjugation and control, he was strangely silent.

Now, some of this is true - he was a professional leftist and he was uncritical of anti-racism - but much of it is simply false. David Graeber was a true revolutionary who practiced what he preached, as anyone who has read Direct Action or The Democracy Project will be well aware.

This really ground my gears, because I believe that David Graeber was most likely assassinated. By no means do I agree with every word that Graeber ever wrote, but I am of the utmost conviction that we should immortalize David Graeber as an anarchist martyr and saint.

That said, I am also of the opinion that “to the dead you owe only truth”. Although I regard David Graeber as the most important thinker of the 21st century so far, I know he would want his ideas to be discussed and debated in true intellectual fashion, which is ruthless.

So, today I’d like to respond to one part of Darren Allen’s critique - that David Graeber was a technophile who dreamt of flying cars.

For those who are unaware, the schism between leftist anarchism (a.k.a. red anarchism) and post-leftist anarchism (a.k.a. green anarchism) has everything to do with 1) technology, and 2) identity politics.

Uncle Ted really did deal a death blow to Leftist anarchism back in the nineties, but not everyone has gotten the memo yet.

WAS DAVID GRAEBER A TECHNOPHILE?

David Graeber was indeed a leftist, I won’t argue about that.

But I think that his views on technology have been misinterpreted by some. David Graeber wore at least three hats. As an anarchist, he was an ideologue. As an activist, he was a pragmatist. And as an anthropologist, he did his best to be an impartial observer of modern society. I believe this explains most of the perceived inconsistencies which Darren Allen critiques.

For that reason, I am posting Graeber’s very interesting essay Flying Cars and the Declining Rate of Profit, which is where the “flying cars” meme comes from. In this essay, Graeber is clearly writing as an anthropologist studying his own society’s imaginary, which I find fascinating.

Is it the most anarchist thing ever written? No. It’s not about ideology. It’s about imagination, and our perceptions about what is possible.

David Graeber described himself as “a professional optimist”, and clearly wanted to be a techno-optimist. But he was deeply disappointed with the failure of technology to live up to its hype.

So was he a technophile? Yes and no, I guess. But like all truly great thinkers, he never fit neatly into any one box.

Flying Cars and the Declining Rate of Profit is excerpted from The Utopia of Rules, which I highly recommend.

I’m also pleased to announce that Darren Allen has a new book out, called The Fire Sermon, which sounds pretty lit.

Dang. This Darren Allen guy really knows how to make you want something, doesn’t he?

Will it live up to the hype? We’ll see!

Keep your eyes out for Paul Cudenec’s review, coming soon!

Anyway, my main point in writing this is to say the Great David Graeber Debate is far from over. Now that he’s passed on, the important thing is to make the most of the intellectual legacy he bequeathed to us.

Also, someone really needs to write a song about our martyred comrade! What the fuck, people!

Here’s one for inspiration:

I have no doubt that I’ll be talking about his ideas for the rest of my life, and in my humble opinion, no one who takes a serious interest in anarchist theory can afford to neglect his magisterial oeuvre.

Pro tip for newbs: Start with Fragments of an Anarchist Anthropology.

Okay, I’ll sign off for now. Stay tuned for more tasty morsels of Bite-Sized Graeber!

for the wild,

Crow Qu’appelle

Of Flying Cars and the Declining Rate of Profit

David Graeber, 2012

A secret question hovers over us—a sense of disappointment, a broken promise we were given as children about what our adult world was supposed to be like. I am not referring to the standard false promises that children are always given (about how the world is fair, or how those who work hard shall be rewarded), but to a specific generational promise given to those who were children in the fifties, sixties, seventies, or eighties. This promise was never quite articulated but existed as a set of assumptions about what our adult world would be like. Since it was never fully expressed, now that it has failed to come true, we’re left confused—indignant but embarrassed by our own indignation, ashamed that we were ever so naïve to believe our elders.

Where, in short, are the flying cars? Where are the force fields, tractor beams, teleportation pods, antigravity sleds, tricorders, immortality drugs, colonies on Mars, and all the other technological wonders any child growing up in the mid-to-late twentieth century assumed would exist by now? Even those inventions that seemed ready to emerge—like cloning or cryogenics—ended up betraying their lofty promises. What happened to them?

We are well informed about the wonders of computers, as if this is some sort of unanticipated compensation, but in fact, we haven’t progressed in computing to the extent people in the fifties expected. We don’t have computers with which we can have interesting conversations, nor robots that can walk our dogs or take our clothes to the laundromat.

As someone who was eight years old at the time of the Apollo moon landing, I remember calculating that I would be thirty-nine in the magic year 2000 and wondering what the world would be like. Did I expect to be living in such a world of wonders? Of course. Everyone did. Do I feel cheated now? It seemed unlikely that I would live to see all the things I read about in science fiction, but it never occurred to me that I wouldn’t see any of them.

At the turn of the millennium, I was expecting an outpouring of reflections on why we had gotten the future of technology so wrong. Instead, just about all the authoritative voices—both Left and Right—began their reflections from the assumption that we do live in an unprecedented new technological utopia of one sort or another.

The common way of dealing with the uneasy sense that this might not be so is to brush it aside, to insist that all the progress that could have happened has already happened, and to treat any further expectations as silly. “Oh, you mean all that Jetsons stuff?” I’m asked—as if to say, But that was just for children! Surely, as grown-ups, we understand The Jetsons offered as accurate a view of the future as The Flintstones did of the Stone Age.

Even in the seventies and eighties, sober sources such as National Geographic and the Smithsonian were informing children about imminent space stations and expeditions to Mars. Creators of science fiction movies used to set concrete dates, often no more than a generation in the future, in which to place their futuristic fantasies. In 1968, Stanley Kubrick felt that a movie-going audience would find it perfectly natural to assume that only thirty-three years later, in 2001, we would have commercial moon flights, city-like space stations, and computers with human personalities maintaining astronauts in suspended animation while traveling to Jupiter. Video telephony is just about the only new technology from that movie that has appeared—and it was technically possible even when the movie was showing.

2001 can be seen as a curio, but what about Star Trek? The Star Trek mythos was set in the sixties too, but the show kept getting revived, leaving audiences for Star Trek: Voyager in, say, 2005, to try to figure out what to make of the fact that, according to the program’s logic, the world was supposed to be recovering from fighting off the rule of genetically engineered supermen in the Eugenics Wars of the nineties.

By 1989, when the creators of Back to the Future II dutifully placed flying cars and anti-gravity hoverboards in the hands of ordinary teenagers in the year 2015, it wasn’t clear if this was meant as a prediction or a joke.

The usual move in science fiction is to remain vague about the dates, rendering “the future” a zone of pure fantasy, no different from Middle Earth or Narnia, or like Star Wars’ “a long time ago in a galaxy far, far away.” As a result, our science fiction future is, most often, not a future at all, but more like an alternative dimension—a dream-time, a technological Elsewhere existing in days to come, much like elves and dragon-slayers existed in the past. Another screen for the displacement of moral dramas and mythic fantasies into the dead ends of consumer pleasure.

Might the cultural sensibility that came to be referred to as postmodernism best be seen as a prolonged meditation on all the technological changes that never happened? The question struck me as I watched one of the recent Star Wars movies. The movie was terrible, but I couldn’t help but feel impressed by the quality of the special effects. Recalling the clumsy special effects typical of fifties sci-fi films, I kept thinking how impressed a fifties audience would have been if they’d known what we could do by now—only to realize, “Actually, no. They wouldn’t be impressed at all, would they? They thought we’d be doing this kind of thing by now. Not just figuring out more sophisticated ways to simulate it.”

That last word—simulate—is key. The technologies that have advanced since the seventies are mainly either medical technologies or information technologies—largely, technologies of simulation. These are what Jean Baudrillard and Umberto Eco called the “hyper-real,” the ability to make imitations more realistic than the originals. The postmodern sensibility—the feeling that we had somehow entered an unprecedented historical period in which we understood there was nothing new, that grand historical narratives of progress and liberation were meaningless, that everything now was simulation, ironic repetition, fragmentation, and pastiche—makes sense in a technological environment where the only breakthroughs were those that made it easier to create, transfer, and rearrange virtual projections of things that either already existed or, we came to realize, never would.

Surely, if we were vacationing in geodesic domes on Mars or carrying pocket-size nuclear fusion plants or telekinetic mind-reading devices, no one would have been talking like this. The postmodern moment was a desperate way to dress up what could otherwise only be felt as bitter disappointment as something epochal, exciting, and new.

In the earliest formulations, largely from the Marxist tradition, much of the technological background was acknowledged. Fredric Jameson’s Postmodernism, or the Cultural Logic of Late Capitalism proposed the term “postmodernism” to describe the cultural logic suited to a new technological phase of capitalism, one that Marxist economist Ernest Mandel had heralded as early as 1972. Mandel argued that humanity was on the verge of a “third technological revolution” as profound as the Agricultural or Industrial Revolutions, where computers, robots, new energy sources, and new information technologies would replace industrial labor. This era was referred to as “the end of work,” where designers and computer technicians would craft the visions that cybernetic factories would produce.

End-of-work arguments were popular in the late 1970s and early 1980s as social thinkers pondered what would happen to the working-class-led popular struggle once the working class no longer existed. (The answer: it would turn into identity politics.) Jameson explored the forms of consciousness and historical sensibilities likely to emerge from this new age.

What happened instead was the spread of information technologies and new methods of organizing transportation—such as the containerization of shipping—which enabled the outsourcing of industrial jobs to East Asia, Latin America, and other regions where cheap labor allowed manufacturers to use less technologically advanced production techniques than they would have needed to employ at home.

For those living in Europe, North America, and Japan, the results seemed to align with predictions. Smokestack industries disappeared, and jobs became divided between a lower stratum of service workers and an upper stratum of white-collar workers in antiseptic bubbles, interacting with computers. However, beneath this post-work civilization lay the unsettling truth that it was a fraud. High-tech sneakers, for instance, were not being produced by intelligent cyborgs or self-replicating nanotechnology; instead, they were made on old-fashioned Singer sewing machines by the daughters of Mexican and Indonesian farmers, who, due to WTO or NAFTA-sponsored trade deals, had been displaced from their ancestral lands. This guilty awareness underpinned the postmodern sensibility and its celebration of images and surfaces.

Why did the anticipated explosion of technological growth—the moon bases, robot factories—fail to materialize? There are two possibilities. Either our expectations about the pace of technological change were unrealistic (in which case, we need to understand why so many intelligent people believed they weren’t), or those expectations were realistic (in which case, we need to understand what happened to derail credible ideas and prospects).

Most social analysts choose the first explanation, often tracing the issue to the Cold War space race. They ask why both the United States and the Soviet Union became so obsessed with manned space travel, which was never an efficient way to conduct scientific research and encouraged unrealistic ideas about the human future.

Could it be that both the U.S. and the Soviet Union, as societies of pioneers—one expanding across the Western frontier, the other across Siberia—shared a commitment to the myth of a limitless, expansive future? This idea of human colonization of vast, empty spaces may have convinced both superpowers’ leaders that they had entered a "space age" where they were battling for control of the future. Certainly, many myths were at play, but that doesn’t determine the feasibility of such projects.

Some science fiction fantasies might have been realized. Earlier generations saw many of their science fiction fantasies come to life. Those who grew up around the turn of the century reading Jules Verne or H.G. Wells envisioned a world of flying machines, rocket ships, submarines, radio, and television by 1960—and that’s largely what they got. If it wasn’t unrealistic in 1900 to dream of men traveling to the moon, why was it unrealistic in the 1960s to dream of jet-packs and robot maids?

In fact, even as these dreams were being articulated, the material base for their realization was already beginning to erode. There is reason to believe that by the 1950s and 60s, the pace of technological innovation was already slowing from the heady pace of the first half of the century. There was a final burst in the 1950s when microwave ovens (1954), the Pill (1957), and lasers (1958) appeared in quick succession. But since then, technological advances have mostly taken the form of clever new ways to combine existing technologies (as seen in the space race) and new methods of applying existing technologies to consumer use (such as television, which was invented in 1926 but only mass-produced after the war).

Yet the space race gave the impression that remarkable advances were occurring, and the popular perception in the 1960s was that the pace of technological change was speeding up in terrifying, uncontrollable ways.

Alvin Toffler's 1970 bestseller Future Shock argued that nearly all the social problems of the 1960s could be traced back to the increasing pace of technological change. The endless stream of scientific breakthroughs was transforming daily life, leaving Americans without any clear idea of what constituted "normal" life. Consider the family, where not only the introduction of the Pill but also the looming prospects of in vitro fertilization, test-tube babies, and sperm and egg donation were poised to make the very concept of motherhood obsolete.

Humans were not psychologically prepared for the pace of change, Toffler claimed. He coined a term for this phenomenon: "accelerative thrust." This trend, he argued, had begun with the Industrial Revolution, and by around 1850, it had become unmistakable. Not only was everything changing, but most things—human knowledge, population size, industrial growth, and energy consumption—were changing exponentially. Toffler suggested that the only solution was to impose some form of control over this process by creating institutions that could assess emerging technologies and their likely effects, ban those deemed too socially disruptive, and guide development toward social harmony.

While many of the historical trends Toffler described were accurate, his book was published just as many of these exponential trends were beginning to halt. Around 1970, the rate at which scientific papers were published, a figure that had doubled roughly every fifteen years since 1685, began to level off. The same trend occurred with books and patents.

Toffler’s focus on acceleration was particularly unfortunate in the context of human travel speeds. For most of human history, the top speed at which people could travel was around 25 miles per hour. By 1900, this had increased to 100 miles per hour, and over the next seventy years, it appeared to increase exponentially. By 1970, when Toffler was writing, the record for the fastest speed any human had ever traveled was approximately 25,000 mph, set by the crew of Apollo 10 in 1969. At that rate, it must have seemed reasonable to assume that humanity would be exploring other solar systems within a few decades.

However, since 1970, there has been no further increase in travel speed. The Apollo 10 crew still holds the record for the fastest speed achieved by humans. While the Concorde, which first flew in 1969, reached a top speed of 1,400 mph, and the Soviet Tupolev Tu-144 hit an even faster 1,553 mph, these speeds have not only failed to increase, they have decreased since the Tu-144 was canceled and the Concorde was abandoned.

Despite these developments, Toffler’s career continued to thrive. He kept refining his analysis and making bold new predictions. In 1980, he published The Third Wave, borrowing from Ernest Mandel's "third technological revolution." While Mandel believed this new era would spell the end of capitalism, Toffler assumed capitalism was eternal. By 1990, Toffler had become the intellectual guru of Republican Congressman Newt Gingrich, who claimed that his 1994 Contract With America was partly inspired by Toffler's assertion that the United States needed to shift from an outdated, materialist, industrial mindset to a new, free-market, information-age, Third Wave civilization.

There are ironies in this connection. One of Toffler’s key achievements was inspiring the creation of the Office of Technology Assessment (OTA). Yet one of Gingrich’s first acts upon gaining control of the House of Representatives in 1995 was defunding the OTA, citing it as an example of government waste. Still, this was not entirely contradictory. By then, Toffler had moved away from influencing public policy directly and was making a living by giving seminars to CEOs and corporate think tanks. His insights had become privatized.

Gingrich also had a second guru, libertarian theologian George Gilder. Like Toffler, Gilder was obsessed with technology and social change, although he was more optimistic. Embracing a radical version of Mandel’s Third Wave argument, Gilder insisted that the rise of computers was leading to the "overthrow of matter." The old, materialist industrial society, where value came from physical labor, was giving way to an Information Age, where value emerged directly from the minds of entrepreneurs—much like the world had originally appeared ex nihilo from the mind of God. In a proper supply-side economy, Gilder argued, money would similarly emerge ex nihilo from the Federal Reserve and into the hands of value-creating capitalists. Supply-side economic policies, he concluded, would ensure that investment continued to flow away from old government boondoggles like the space program and toward more productive information and medical technologies.

However, if there was indeed a shift away from research into better rockets and robots, and toward technologies like laser printers and CAT scans, it began well before Toffler’s Future Shock (1970) or Gilder’s Wealth and Poverty (1981). Their success indicated that the issues they raised—namely, that existing technological development could lead to social upheaval and that technological progress needed to be guided in ways that did not challenge existing power structures—had long been resonating with policymakers and industrial leaders.

Industrial capitalism has fostered an extremely rapid rate of scientific and technological advancement—unparalleled in human history. Even capitalism’s fiercest critics, Karl Marx and Friedrich Engels, celebrated its ability to unleash "productive forces." Marx and Engels also believed that capitalism’s constant need to revolutionize industrial production would eventually lead to its downfall. Marx argued that value—and, by extension, profits—could only be derived from human labor. Competition forces factory owners to mechanize production and reduce labor costs, but while this benefits individual firms in the short term, mechanization ultimately drives down the general rate of profit.

For 150 years, economists have debated whether this is true. If it is, then the decision by industrialists in the 1960s not to invest in robot factories and instead relocate to labor-intensive, low-tech facilities in China and the Global South makes perfect sense.

As mentioned earlier, the pace of technological innovation in production processes—within the factories themselves—began to slow in the 1950s and 1960s. However, America's rivalry with the Soviet Union created the illusion that innovation was accelerating. The awe-inspiring space race, alongside U.S. industrial planners’ efforts to apply existing technologies to consumer products, fostered an optimistic sense of prosperity and guaranteed progress, which undercut the appeal of working-class politics.

These efforts were often reactive to Soviet initiatives. Yet, at the end of the Cold War, the popular American perception of the Soviet Union shifted from that of a bold and terrifying rival to a pitiful, dysfunctional system. In the 1950s, however, many U.S. planners feared that the Soviet system was actually more effective. They remembered that, during the 1930s, while the United States was mired in the Great Depression, the Soviet Union enjoyed unprecedented economic growth rates of 10-12% per year. This growth was followed by the production of tank armies that defeated Nazi Germany, the launch of Sputnik in 1957, and the first manned spacecraft, Vostok, in 1961.

It is often said that the Apollo moon landing was the greatest achievement of Soviet communism. The United States likely would not have pursued such a feat without the cosmic ambitions of the Soviet Politburo. While we tend to think of the Politburo as a group of unimaginative, gray bureaucrats, they were bureaucrats who dared to dream astounding dreams. The dream of world revolution was only their first. Most of their grandiose plans—such as changing the course of mighty rivers—either proved to be ecological and social disasters or, like Joseph Stalin’s 100-story Palace of the Soviets or a 20-story statue of Vladimir Lenin, never made it off the ground.

After the initial successes of the Soviet space program, few of their grand schemes were fully realized, but the leadership never stopped coming up with new ones. Even in the 1980s, while the United States was pursuing its own last grandiose project, Star Wars, the Soviets were still planning to transform the world through creative technological innovations. Few outside Russia remember most of these projects today, but substantial resources were devoted to them. Notably, unlike Star Wars, which was designed to sink the Soviet Union, many of these projects were not military in nature. For example, there were attempts to solve world hunger by harvesting lakes and oceans with edible bacteria called spirulina or to solve the energy crisis by launching hundreds of massive solar-power platforms into orbit and beaming electricity back to Earth.

The American victory in the space race meant that, after 1968, U.S. planners no longer took the competition seriously. As a result, the mythology of the final frontier was maintained, even as research and development shifted away from projects that could lead to Mars bases and robot factories.

The standard explanation is that this shift resulted from the triumph of the market. The Apollo program was a Big Government project, Soviet-inspired in its need for a national effort coordinated by government bureaucracies. Once the Soviet threat diminished, capitalism was supposedly free to return to its normal decentralized, free-market imperatives, focusing on privately funded research into marketable products like personal computers. This is the narrative promoted by thinkers like Alvin Toffler and George Gilder in the late 1970s and early 1980s.

In reality, the United States never abandoned large, government-controlled technological development. Instead, efforts shifted toward military research—not only Soviet-scale projects like Star Wars but also weapons development, communications, surveillance technologies, and other security-related concerns. In fact, missile research had always received far more funding than the space program. By the 1970s, even basic scientific research was increasingly directed by military priorities. One reason we don’t have robot factories today is that roughly 95% of robotics research funding has been funneled through the Pentagon, which is more interested in developing unmanned drones than automating factories.

A case could be made that the shift toward research in information technologies and medicine was less about market-driven consumer demand and more part of a larger effort to ensure U.S. victory in the global class war—through a combination of absolute U.S. military dominance abroad and the destruction of social movements at home.

The technologies that did emerge were most conducive to surveillance, work discipline, and social control. While computers have opened up certain freedoms, they have been employed in ways that counter Abbie Hoffman’s dream of a workless utopia. Instead, they have facilitated the financialization of capital, driving workers into debt and enabling employers to create "flexible" work regimes that destroy traditional job security and increase working hours for almost everyone. Along with the export of factory jobs, this new work regime has dismantled the union movement and eliminated the possibility of effective working-class politics.

Despite unprecedented investment in medical and life sciences research, we still await cures for diseases like cancer and the common cold. The most significant medical breakthroughs have come in the form of drugs like Prozac, Zoloft, and Ritalin—designed to keep people functional despite the demands of the new work regimes, ensuring that they don't fall into complete dysfunction.

Given these results, what will neoliberalism's epitaph look like? Historians may conclude that it was a form of capitalism that systematically prioritized political imperatives over economic ones. When given the choice between a course of action that would make capitalism seem like the only possible system and one that would transform it into a viable long-term economic model, neoliberalism consistently chose the former. There is ample reason to believe that eliminating job security and increasing working hours does not result in a more productive (let alone innovative or loyal) workforce. In economic terms, the results have likely been negative, as confirmed by lower growth rates worldwide in the 1980s and 1990s.

However, the neoliberal approach has been effective in depoliticizing labor and narrowing the future. Economically, the expansion of armies, police forces, and private security services constitutes dead weight. It’s possible that the very weight of the apparatus built to ensure the ideological victory of capitalism may eventually sink it. But it’s clear that part of the neoliberal project has been to choke off any sense of an inevitable, redemptive future that could differ from the world we live in.

By the 1960s, conservative political forces were becoming increasingly anxious about the socially disruptive effects of technological progress, and employers were beginning to worry about the economic impact of mechanization. The fading Soviet threat allowed for a reallocation of resources away from projects that could challenge social and economic arrangements and toward efforts that would reverse the gains of progressive social movements and achieve victory in the global class war. This shift was framed as a retreat from Big Government and a return to the free market, but in reality, government-directed research simply moved from programs like NASA and alternative energy sources to military, information, and medical technologies.

This doesn’t explain everything, however. It doesn’t explain why, even in areas where well-funded research has focused, we haven’t seen the kinds of advances anticipated fifty years ago. If 95% of robotics research has been funded by the military, then where are the killer robots shooting death rays from their eyes?

Military technology has advanced, but with notable limitations. One reason we survived the Cold War is that, while nuclear bombs might have functioned as advertised, their delivery systems were not accurate enough to reliably hit cities, let alone specific targets within them. As a result, launching a nuclear first strike was not feasible unless the goal was to destroy the entire world.

Modern cruise missiles are more accurate, but precision weapons have still failed to assassinate specific individuals (Saddam, Osama, Qaddafi), even after hundreds were deployed. And despite significant investment, ray guns have not materialized. The Pentagon has likely spent billions on ray gun research, but the closest they’ve come are lasers that might blind an enemy gunner if aimed directly at their eyes. This is pathetic, given that lasers are a 1950s technology. Phasers that can be set to stun are not even on the drawing board, and for infantry combat, the weapon of choice remains the AK-47, a Soviet design from 1947.

The Internet is often touted as a remarkable innovation, but in reality, it is a super-fast, globally accessible combination of a library, post office, and mail-order catalog. Had the Internet been described to a science fiction aficionado in the 1950s and 60s as the most dramatic technological achievement of their time, their reaction would likely have been disappointment. Fifty years on, and this is the best our scientists have managed? We expected computers that could think!

Overall, research funding has increased dramatically since the 1970s. The corporate sector has increased its share of that funding to the point where private enterprise now funds twice as much research as the government. However, the total amount of government research funding in real terms is still higher than it was in the 1960s. Basic, curiosity-driven research—the kind that is not driven by immediate practical applications and is most likely to lead to unexpected breakthroughs—makes up a shrinking proportion of the total. Yet, so much money is being thrown around that overall levels of basic research funding have increased.

Despite this, most observers agree that the results have been disappointing. We no longer see the same continual stream of conceptual revolutions—such as genetic inheritance, relativity, psychoanalysis, or quantum mechanics—that people had come to expect a century ago. Why?

One reason is the concentration of resources on a few massive projects, or "big science," as it’s now called. The Human Genome Project is often cited as an example. After spending nearly three billion dollars and employing thousands of scientists across five countries, the project has mainly established that sequencing genes offers less useful information than initially hoped. The hype and political investment surrounding such projects demonstrate the extent to which even basic research has been co-opted by political, administrative, and marketing imperatives, making it unlikely that anything truly revolutionary will occur.

Our fascination with the mythic origins of Silicon Valley and the Internet has blinded us to what’s really going on. It allows us to imagine that research and development are primarily driven by small teams of plucky entrepreneurs or decentralized cooperation, as seen in open-source software. This is not the case, even though such research teams are more likely to produce results. Research and development are still driven by large bureaucratic projects. What has changed is the bureaucratic culture. The increasing interconnection of government, universities, and private firms has led everyone to adopt the language, sensibilities, and organizational forms of the corporate world. While this may help create marketable products, it has had catastrophic consequences for fostering original research.

My own knowledge comes from universities in the United States and Britain. In both countries, the last thirty years have seen an explosion in the amount of time spent on administrative tasks at the expense of everything else. At my university, for example, we have more administrators than faculty members, and faculty are expected to spend at least as much time on administration as on teaching and research combined. This is more or less the case at universities worldwide.

The growth of administrative work has resulted directly from the introduction of corporate management techniques, which are invariably justified as ways of increasing efficiency and fostering competition. In practice, this means that most people end up spending their time selling things—grant proposals, book proposals, assessments of student and colleague work, prospectuses for new majors, and so on. Universities themselves have become brands to be marketed to prospective students and contributors.

As marketing overtakes university life, it generates documents promoting imagination and creativity that are designed to strangle both in the cradle. No major new works of social theory have emerged in the United States in the last thirty years. Instead, we have become like medieval scholastics, endlessly annotating French theory from the 1970s, all while knowing that if new incarnations of Gilles Deleuze, Michel Foucault, or Pierre Bourdieu were to appear in today’s academy, we would likely deny them tenure.

There was a time when academia was a refuge for the eccentric, brilliant, and impractical. Not anymore. It is now the domain of professional self-marketers. As a result, in one of the most bizarre fits of social self-destruction in history, we seem to have decided that there is no place for our eccentric, brilliant, and impractical citizens. Most languish in their mothers’ basements, occasionally making sharp interventions on the Internet.

If this is the case in the social sciences, where research is carried out with minimal overhead and largely by individuals, imagine how much worse it is for astrophysicists. Indeed, astrophysicist Jonathan Katz recently warned students considering a career in science that even after the usual decade of being someone else’s flunky, they could expect their best ideas to be stymied at every turn:

“You will spend your time writing proposals rather than doing research. Worse, because your proposals are judged by your competitors, you cannot follow your curiosity but must instead anticipate and deflect criticism rather than solve important scientific problems. It is proverbial that original ideas are the kiss of death for a proposal, because they have not yet been proven to work.”

That explains why we don’t have teleportation devices or anti-gravity shoes. Common sense suggests that to maximize scientific creativity, you should find some bright people, give them the resources they need to pursue whatever ideas come into their heads, and then leave them alone. Most ideas will come to nothing, but a few might lead to significant discoveries. However, if you want to minimize the chance of unexpected breakthroughs, force those same people to spend their time competing to convince you that they already know what they’re going to discover.

In the natural sciences, the tyranny of managerialism is compounded by the privatization of research results. As economist David Harvie reminds us, "open source" research is not a new concept. Scholarly research has always been open source in the sense that scholars share materials and results. There is competition, but it is "convivial." This is no longer true for scientists in the corporate sector, where findings are closely guarded. Moreover, the corporate ethos has spread into academia and research institutes, causing even publicly funded scholars to treat their findings as personal property. Academic publishers also play a role, making published findings increasingly difficult to access, further enclosing the intellectual commons. As a result, convivial, open-source competition has transformed into classic market competition.

There are many forms of privatization, including the simple buying up and suppression of inconvenient discoveries by large corporations fearful of their economic impact. We don’t know how many synthetic fuel formulas have been bought up and locked away by oil companies, but it’s hard to imagine that nothing of this sort has occurred. More subtle is the way the managerial ethos discourages anything adventurous or unconventional, especially if there is no prospect of immediate results. The Internet can even exacerbate this problem. As Neal Stephenson put it:

"Most people who work in corporations or academia have witnessed something like the following: A number of engineers are sitting together in a room, bouncing ideas off each other. Out of the discussion emerges a new concept that seems promising. Then some laptop-wielding person in the corner, having performed a quick Google search, announces that this ‘new’ idea is, in fact, an old one; it—or something similar—has already been tried. If it failed, no manager who wants to keep their job will approve spending money on it. If it succeeded, it’s patented, and entry to the market is presumed unattainable due to ‘first-mover advantage’ and ‘barriers to entry.’ The number of seemingly promising ideas crushed this way must number in the millions."

Thus, a timid, bureaucratic spirit now pervades every aspect of cultural life. It is draped in the language of creativity, initiative, and entrepreneurialism, but the language is meaningless. The thinkers most likely to make conceptual breakthroughs are the least likely to receive funding, and even if breakthroughs occur, it is unlikely anyone will pursue their most daring implications.

Giovanni Arrighi noted that after the South Sea Bubble, British capitalism largely abandoned the corporate form. By the time of the Industrial Revolution, Britain relied on a combination of high finance and small family firms—a pattern that held through the next century, the period of greatest scientific and technological innovation. (Britain at that time was also known for its generosity toward eccentrics and oddballs, much as contemporary America is intolerant of them. It was common for them to become rural vicars, who, predictably, became a major source of amateur scientific discoveries.)

Contemporary bureaucratic corporate capitalism was not born in Britain but in the United States and Germany, the two rival powers that fought two bloody wars in the first half of the twentieth century to determine who would replace Britain as the dominant world power. These wars culminated, fittingly enough, in government-sponsored scientific programs to see who would be the first to discover the atom bomb. It is significant, then, that our current technological stagnation seems to have begun after 1945, when the United States took over from Britain as the organizer of the global economy.

Americans don’t like to think of themselves as a nation of bureaucrats—quite the opposite—but if we stop imagining bureaucracy as something confined to government offices, it becomes clear that this is exactly what we’ve become. The final victory over the Soviet Union did not lead to the triumph of the market but rather cemented the dominance of conservative managerial elites and corporate bureaucrats who use short-term, competitive, bottom-line thinking to suppress anything that might have revolutionary implications.

If we fail to see that we live in a bureaucratic society, it’s because bureaucratic norms and practices have become so pervasive that we can’t even imagine doing things any other way.

Computers have played a crucial role in narrowing our social imaginations. Just as the invention of new industrial automation methods in the 18th and 19th centuries paradoxically turned more people into full-time industrial workers, today’s software, which is supposed to save us from administrative responsibilities, has turned us into part- or full-time administrators. In the same way that university professors now expect to spend more and more of their time managing grants, affluent housewives have come to accept that they will spend weeks each year filling out forty-page online forms to get their children into schools. We all spend increasing amounts of time punching passwords into phones to manage bank accounts, pay bills, and perform tasks once handled by travel agents, brokers, and accountants.

Someone once calculated that the average American will spend a cumulative six months of their life waiting for traffic lights to change. I don’t know if similar calculations exist for time spent filling out forms, but it must be at least as long. No population in human history has spent as much time on paperwork.

In this final, stultifying stage of capitalism, we are moving from poetic technologies to bureaucratic technologies. By poetic technologies, I mean the use of rational and technical means to bring wild fantasies to life. Poetic technologies are as old as civilization itself. Lewis Mumford noted that the first complex machines were made of people. Egyptian pharaohs built the pyramids using mastery of administrative procedures, developing production-line techniques to divide complex tasks into simple operations, each assigned to a different team—even though they lacked mechanical technology beyond inclined planes and levers. Administrative oversight turned armies of peasant farmers into the cogs of a vast machine. Much later, after mechanical cogs were invented, the design of complex machinery elaborated on principles originally developed to organize people.

Machines—whether powered by human arms and torsos or by pistons, wheels, and springs—have been used to realize impossible fantasies: cathedrals, moon landings, and transcontinental railways. Certainly, poetic technologies often carried a dark side—the "poetry" of the dark satanic mills as much as of grace or liberation. But rational administrative techniques were always in service of some grand, fantastic end.

From this perspective, the grand Soviet plans—even if never realized—represented the climax of poetic technologies. What we have now is the reverse. Vision, creativity, and mad fantasies are still encouraged, but they remain free-floating, without any pretense that they could ever take form or substance. The most powerful nation in history has spent the last few decades telling its citizens that they can no longer contemplate fantastic collective enterprises, even though the environmental crisis demands it.

What are the political implications of this? To start, we need to rethink some of our most basic assumptions about capitalism. The first is that capitalism is synonymous with the market, and therefore antithetical to bureaucracy, which is supposedly the domain of the state.

The second assumption is that capitalism is inherently technologically progressive. Marx and Engels, in their enthusiasm for the industrial revolutions of their time, were wrong about this. Or, to be more precise: they were right to insist that the mechanization of industrial production would destroy capitalism, but they were wrong to assume that market competition would compel factory owners to mechanize anyway. If that mechanization didn’t happen, it’s because market competition is not as essential to the nature of capitalism as they had assumed. Today’s form of capitalism, in which much competition takes the form of internal marketing within large semi-monopolistic bureaucracies, would come as a surprise to them.

Defenders of capitalism make three broad historical claims: first, that it has fostered rapid scientific and technological growth; second, that while it throws enormous wealth to a small minority, it does so in ways that increase overall prosperity; and third, that it creates a more secure and democratic world for everyone. It’s clear that capitalism is no longer doing any of these things. In fact, many of its defenders are retreating from the argument that it’s a good system and instead falling back on the claim that it’s the only possible system—or at least the only one viable for a complex, technologically sophisticated society like ours.

But how could anyone argue that current economic arrangements are the only ones that will ever be viable under any conceivable future technological society? The argument is absurd. How could anyone possibly know?

There are people who hold that position—on both ends of the political spectrum. As an anthropologist and anarchist, I meet anticivilizational thinkers who insist that not only does modern industrial technology lead to capitalist-style oppression, but that this will inevitably be true of any future technology as well. Therefore, they argue, human liberation can only be achieved by returning to a Stone Age existence. Most of us, however, are not technological determinists.

But claims for capitalism’s inevitability must rely on a kind of technological determinism. If neoliberal capitalism aims to create a world in which no one believes any other economic system could work, then it must suppress not just any vision of an inevitable redemptive future, but also any radically different technological future. Yet here’s the contradiction: defenders of capitalism cannot argue that technological change has ended, because that would mean capitalism is no longer progressive. Instead, they try to convince us that technological progress is continuing and that we live in a world of wonders. But these wonders take the form of modest improvements (the latest iPhone!), rumors of inventions on the horizon ("I hear they’re going to have flying cars soon"), complex ways of juggling information and imagery, and ever more complicated platforms for filling out forms.

I don’t mean to suggest that neoliberal capitalism—or any other system—can succeed in this. First, there’s the challenge of convincing the world that you’re leading in technological progress while actively holding it back. The United States, with its decaying infrastructure, paralysis in the face of global warming, and symbolic abandonment of its manned space program just as China accelerates its own, is doing an especially poor job of this. Second, the pace of change can’t be slowed forever. Breakthroughs will occur, and inconvenient discoveries can’t be permanently suppressed. Other, less bureaucratized parts of the world—or at least places with bureaucracies more open to creative thinking—will inevitably acquire the resources needed to pick up where the U.S. and its allies left off. The Internet does offer opportunities for collaboration and dissemination that might help break through the wall as well. Where the breakthrough will come, we can’t know. Maybe 3D printing will accomplish what robot factories were supposed to, or maybe it will be something else. But it will happen.

There is one conclusion we can feel especially confident about: it won’t happen within the framework of contemporary corporate capitalism, or any form of capitalism. To begin setting up domes on Mars, let alone to develop the means to contact alien civilizations, we’ll need a different economic system. Must this new system be some massive bureaucracy? Why assume it must? We can begin only by breaking up existing bureaucratic structures. And if we are to invent robots that do our laundry and clean our kitchens, we’ll have to ensure that whatever replaces capitalism is based on a far more egalitarian distribution of wealth and power, one that no longer includes the super-rich or the desperately poor willing to do others’ housework. Only then will technology begin to serve human needs. That is the best reason to break free of the dead hand of hedge fund managers and CEOs: to free our fantasies from the screens in which they have imprisoned them, and to allow our imaginations to once again become a material force in human history.